WILDME CASE STUDY

Duration: Aug 2023 - Jan 2024

Role: UX Designer

Focus: User Research, User Interviews, Prototyping

Employer: Carnegie Mellon University Software Engineering Institute

Tools: Figma

I worked as a UX Designer with CMU's SEI on a team researching eXplainable AI (XAI) and human-AI interaction. We partnered with the nonprofit WildMe to develop a process for AI practitioner teams to implement XAI in real-world systems.

project overview

Over the course of a semester, I worked as a UX Designer with CMU’s Software Engineering Institute on a team specializing in human-AI interaction and eXplainable AI (XAI). Our goal was to develop a process for AI practitioner teams to implement XAI in real-world systems, which we tested through a case study with WildMe.

case study timeline

I worked with the team from August 2023 to January 2024, most significantly on the need-finding and prototype-building phases.

what is wildme?

WildMe is a nonprofit that develops AI tools for collaborative wildlife conservation. Its mission is to scale wildlife research and support conservationists by bringing multi-feature techniques, speed, and accuracy to animal monitoring. Its AI-powered computer vision replaces hours of human labor with minutes of computation to help combat the ongoing sixth mass extinction.

For this case study, we worked specifically on two platforms: Seal Codex (AI for seals) and Flukebook (AI for whales and dolphins).

case study goals

Follow our process and gain an understanding of the challenges and opportunities for XAI

Build, evaluate, productionize, and maintain effective explanations for end-users

Share the exact process that we followed with the WildMe team

how it works

seal codex

Phase 1: Data Collection

Researchers upload images of their seal sightings.

Phase 2: Detection

Codex uses a computer vision algorithm to find individual seals in each image (each detection is called an annotation).

Phase 3: Identification

State-of-the-art machine learning algorithms group these annotations by individual.

Researchers can use this data to estimate populations, publish research, and ultimately shape public policy.

flukebook

Phase 1: Data Collection

Researchers upload images of their dolphin or whale sightings.

Phase 2: Detection

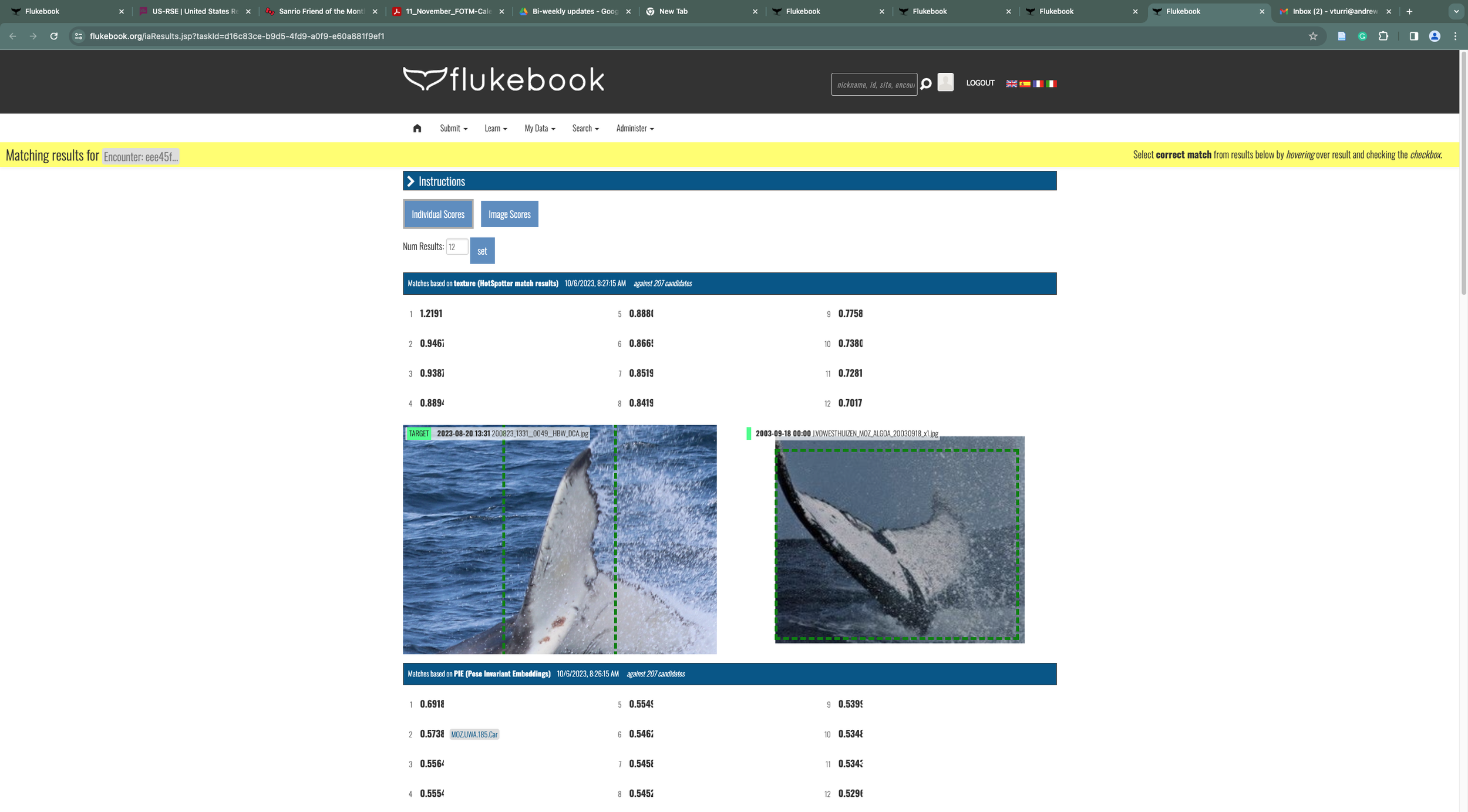

Trained computer vision finds individual whales and dolphins in the photos and identifies the species.

Phase 3: Identification

Algorithms and neural networks identify the animals through unique body coloration or fin edges.

Researchers can use this data to track population dynamics, modeling size and migration to generate new insights and support rapid, data-driven conservation action.

We focused specifically on the individual matching process of both platforms and on how to bring more transparency and explainability to the interface.

research

irb-approved interviews

My first task was developing an interview guide we could use with end-users. Our research method combined semi-structured interviews with a think-aloud protocol. We started by writing a general list of questions, and I focused mainly on the XAI questions, which I based on the article “Question-Driven Design Process for Explainable AI User Experiences”. Here are some of the questions:

What do you think the confidence scores are trying to communicate to you? Is there something that could be done to improve that communication?

Are there any transparency techniques that you know are available to you that you are not using? Why do you not use them? What changes would you like to see with those techniques?

What visual/interactive elements could be incorporated to enhance your understanding of AI decisions?

What kind of error messages/notifications should be provided to you when the AI encounters limitations or uncertainty?

forums

To further familiarize myself with the technology and how AI is currently used within it, I read through WildMe’s community forums to learn about the improvements users want, the issues they run into, and the opinions they hold. We sorted these into a board on Miro for easier visualization.

System Components:

Research goals, e.g. estimating population density of beluga whales in location X

High-level goals for using the system, e.g. we want to speed up our individual identification process

Metadata input process, e.g. inputting the location and species for the image

Bulk upload process, e.g. trying to upload 200 photos and the system stalls

Individual matching process

Heat maps interface

Confidence scores interface

Functional: The primary job that needs to be done

Social: How people believe others perceive them

Emotional: The emotions a person experiences

in summary…

users want:

insight into how the matching process works - clear documentation

more explanation with error messages

to feel confident that the WildMe platform is matching animals correctly

to understand the algorithms and how those algorithms can fulfill their needs

interview guide

We organized the interview around learning goals and grouped questions under each goal, using visuals and images to help interviewees recall their experience. After completing the guide, we submitted it to the IRB and received approval. Here is an example section from our interview guide.

learning goal: we want to understand how people interact with the XAI system and what potential enhancements could promote better comprehension and trust in AI decision-making.

activity: open the Figma prototype of the Codex heat maps and share the screen with interviewees

time: 10 minutes

script:

What do you like and dislike about how the current heatmap gives insight into the prediction?

How often would you refer to the heatmap when making matches?

Is there any information that you would like it to provide that it currently is not?

What would you like to change about the current design of it?

Do you use the confidence scores? What reasons do you use them for?

What do you think the confidence scores are trying to communicate to you? Is there something that could be done to improve that communication?

What kind of error messages/notifications should be provided to you when the AI encounters limitations or uncertainty?

What visual/interactive elements could be incorporated to enhance your understanding of AI decisions?

conducting interviews

We recruited interviewees through a pinned message posted by administrators in the WildMe community forum, asking for participants in a study to improve the WildMe platforms. We received interest and scheduled interviews with 21 people, a mix of researchers and divers who use WildMe for various reasons.

We conducted the interviews over Zoom in early November. One of the interview tasks asked participants to walk us through their process of making a match in Codex, so I built an interactive Seal Codex prototype they could use to demonstrate it. At this stage, we were focused not on improving the UI of the interface but on its explainability and overall experience.

synthesizing interviews

We synthesized the interviews in Miro, sorting quotes into emotional, functional, and social values, and by which aspect of WildMe platforms they referred to.

findings

users need some sort of signal that something is happening on the backend and that they need to wait

signal that the system is working and it isn't broken or frozen

signal for how long the process might take

users need a better way to see the results from all the models

insight into which images are identified by all models

insight into which models perform better or worse

discoverability of the saliency maps is poor - it is unclear to users what it does and where this feature lives

fixing mistakes process also has poor discoverability

there needs to be a clear way to communicate how to un-assign a match after it has been assigned

building prototypes

My final task of this project was building more Flukebook prototypes that could be used for further testing. My team asked me to prototype different methods for integrating behavior descriptions into the interface and displaying potential matches. Some ideas I had for integrating behavior descriptions into the Seal Codex interface were:

icons

text blurbs

key words

pop-up windows

The main purpose of these descriptions is to explain the different algorithms and how the AI calculates the matching score. Because users had issues with discoverability, we also wanted the behavior descriptions to catch their attention. Finally, some algorithms are less accurate for certain types of photos, so it is important to make clear to users that the computer vision Codex uses is not always 100% accurate. Communicating this increases the transparency of the AI.

To display potential matches in a more concise way, I put them into a dashboard-style view where the user can go between matches instead of having to scroll up and down.

I initially made two prototype versions: one that states the behavior descriptions explicitly on the screen with bolding, and one that hides them in a pop-up window opened from an icon, with key phrases bolded. Both use the new dashboard view.

explicit behavior descriptions

pop-up behavior descriptions

original flukebook interface

full prototype of 2nd design

Although my work on this project ended here, the team continued to iterate on these designs and used them in later stages.